CRITER Weekly Roundup

Thursday, February 19

Hi everyone, and happy Thursday!

The short week got away from me a bit, so you’re receiving this newsletter a day later than usual. CRITER will also be on break next week because I have a big date with Brandi Carlile down in Salt Lake City on Tuesday and will be short on time (worth it!).

Speaking of music, I’ve really been enjoying John Craigie’s latest album, I Swam Here, and DID YOU KNOW he’ll be at Treefort? I hope to see you there!

Okay, on to the AI!

AI and Higher Ed

Keep reading Ahhh...I really enjoyed this article in The Atlantic on not giving up on assigning reading in college literature classes:

None of this is to say that higher education faces no challenges in this moment. Universities’ resources are severely constrained; their research missions have been hobbled. In many institutions, academic freedom has been threatened or abrogated. These are bleak conditions, possibly terminal ones for the post–World War II dream of a research university. I agree with those who argue that universities find themselves in a state of crisis. But narratives about the “end of reading” strike me as self-inflicted, the manifestation of a collective depression. (In other words, to borrow from Thoreau, “We know not where we are.”) If we want students to keep reading books, faculty have the most important role to play—regardless of whatever new devices or platforms emerge to capture students’ attention. The reaction to declining reading skills, poor comprehension, and fragmented attention spans should not be to negotiate or compromise, but to double down on the cure.

This aligns with Luiza Jarovsky’s recent post on the rise of a “new humanism.”

Not so alpha Not higher ed, but here’s another investigative piece suggesting that the AI-driven K-12 “Alpha School” has some pretty big problems. Lots to consider here, but this excerpt in particular caught my eye:

The type of surveillance Alpha School uses on students is functionally identical to the type of surveillance used by Crossover, a platform that matches companies with remote workers. Crossover is also owned by Alpha School’s principal Joe Liemandt. Much like Alpha School, Crossover requires employees to install spyware on their computer that records their screens and tracks their mouse movements to make sure they are being productive. Previous reporting described Crossover as a “software sweatshop,” and that the company’s goal is to turn workers into “algorithms” and “human CPUs.”

Universities as ecosystems Nothing shocking or revolutionary in this piece in The Conversation, but the conclusion did catch my eye:

But another answer treats the university as something more than an output machine, acknowledging that the value of higher education lies partly in the ecosystem itself. This model assigns intrinsic value to the pipeline of opportunities through which novices become experts, the mentorship structures through which judgment and responsibility are cultivated, and the educational design that encourages productive struggle rather than optimizing it away. Here, what matters is not only whether knowledge and degrees are produced, but how they are produced and what kinds of people, capacities and communities are formed in the process. In this version, the university is meant to serve as no less than an ecosystem that reliably forms human expertise and judgment.

This op-ed in Inside Higher Ed makes a similar argument, suggesting that higher ed has to move away from measuring “artifacts” and toward interacting with and measuring process.

Not so fast Historian and Vice Dean of AI Initiatives at Columbia University Matthew Connelly wrote this fiery op-ed for the NYT:

Hoping to win recognition as leaders in A.I. or fearful of being left behind, more and more colleges and universities are eagerly partnering with A.I. companies, despite decades of evidence demonstrating the need to test education technology, which often fails to deliver measurable improvements in student learning. A.I. companies are increasingly exerting outsize influence over higher education and using these settings as training grounds to further their goal of creating artificial general intelligence (A.I. systems that can substitute for humans).

Mental health matters A new study of college-age students and their use of AI for mental health support suggests that impacts are shaped by context, usage patterns, and demographic factors:

The report emphasizes the need for “public health leaders, educators and policymakers to move beyond blanket approaches to youth and AI.” Instead, it calls for segment-informed strategies that “strengthen offline support, protect youth agency and ensure AI complements, rather than replaces human connection.”

AI and the Economy

Rent-a-Human? Wired has been publishing some real AI-related bangers lately, but this one on serving as a “rent-a-human” for AI agents felt especially dystopian (but thankfully not especially functional at the moment):

I never ended up hanging any posters or making any cash on RentAHuman during my two days of fruitless attempts. In the past, I’ve done gig work that sucked, but at least I was hired by a human to do actual tasks. At its core, RentAHuman is an extension of the circular AI hype machine, an ouroboros of eternal self-promotion and sketchy motivations. For now, the bots don’t seem to have what it takes to be my boss, even when it comes to gig work, and I’m absolutely OK with that.

Lawbots, nah The AI as Normal Technology guys have a (long, detailed) post out about why AI, by default, isn’t going to transform the legal services industry:

This excitement about AI comes at a time when legal services are expensive. Millions of individuals are priced out of legal assistance, while corporate legal fees are increasing steadily, with hourly rates for partners at large law firms now exceeding $2,300. Unsurprisingly, many observers see the potential for AI to make legal services more accessible by delivering outcomes at lower costs.

Our central claim is that advanced AI will not, by default, help consumers achieve their desired legal outcomes at lower costs. We examine the bottlenecks that stand between AI capability advances and the positive transformation of the practice of law that some envision. For AI to usher in a world of abundant legal services, the profession must address three bottlenecks: regulatory barriers, adversarial dynamics, and human involvement.

For policymakers The National Academies of Sciences, Engineering, and Medicine have released a new consensus study on AI and the Future of Work. The expansive and detailed report covers a lot of ground, but here’s an excerpt from the summary, in which they put the human “agency” back in agentic (see what I did there?):

There is great uncertainty regarding which specific AI capabilities will appear in the coming years and when. As a result, decision makers need to create policies that will be robust to a variety of possible future technology advances and timetables. Moreover, the capabilities developed and, more importantly, whether and how they are implemented will depend on society’s collective choices.

IBM gets it A lot of companies seem to be rushing to cut back on entry-level hiring because AI, I guess. IBM is bucking that trend:

Hardware giant IBM plans to triple entry-level hiring in the U.S. in 2026, according to reporting from Bloomberg. Nickle LaMoreaux, IBM’s chief human resource officer, announced the initiative at Charter’s Leading with AI Summit on Tuesday.

“And yes, it’s for all these jobs that we’re being told AI can do,” LaMoreaux said.

These jobs will look different than the entry-level jobs IBM used to offer, she explained. According to LaMoreaux, she went through and changed the descriptions for these entry-level jobs so they were less focused on areas AI can actually automate — like coding — and more focused on people-forward areas like engaging with customers.

Investor cooling Axios reports that the rabid enthusiasm we’ve seen for AI investments may be cooling just a skosh:

A record share of investors — 35% — say companies are spending too much on AI, per a new Bank of America global fund manager survey out Tuesday.

Meanwhile, 54% of Americans surveyed over the past week think that companies are investing too much in AI, per YouGov/The Economist. A majority also don’t trust AI and believe it will eliminate jobs.

AI and the Culture

The AI Apocalypse? The culture was abuzz again last week with the ongoing debate over whether AI, or AGI (artificial general intelligence), poses an existential threat to humanity. This round was prompted by 1) this long (AI-generated) post on X, 2) members of the Trust and Safety team at OpenAI leaving the company out of grave concerns about the risks AI poses, and 3) a few AI models creating new AIs (replication?).

The responses to the possible revolution/salvation/apocalypse fall into a few buckets:

1. Those, like Ryan Broderick at Garbage Day, who believe all the hand-wringing is part of the doom cycle and is primarily a marketing ploy.

2. Those who are genuinely frightened about runaway AI.

3. Those who continue to call our attention to generative AI as a “normal technology” that needs to be regulated like other technologies, and who feel that talk of an AI apocalypse pulls our attention away from the real, everyday harms the tech is causing now.

We won’t know who, exactly, is right until we have some hindsight.

Crossing the uncanny valley According to Superhuman AI, ByteDance’s Seedance 2.0 can produce much better, longer-form videos than we’ve seen from AI before. You can see examples on X here, here, and here.

But there are also some claims that these videos were actually made with a lot of human help and green screens, so take everything with a grain of salt right now, I guess.

AI Doc I’m a big documentary fan, so I’ll be adding this one on AI to my list.

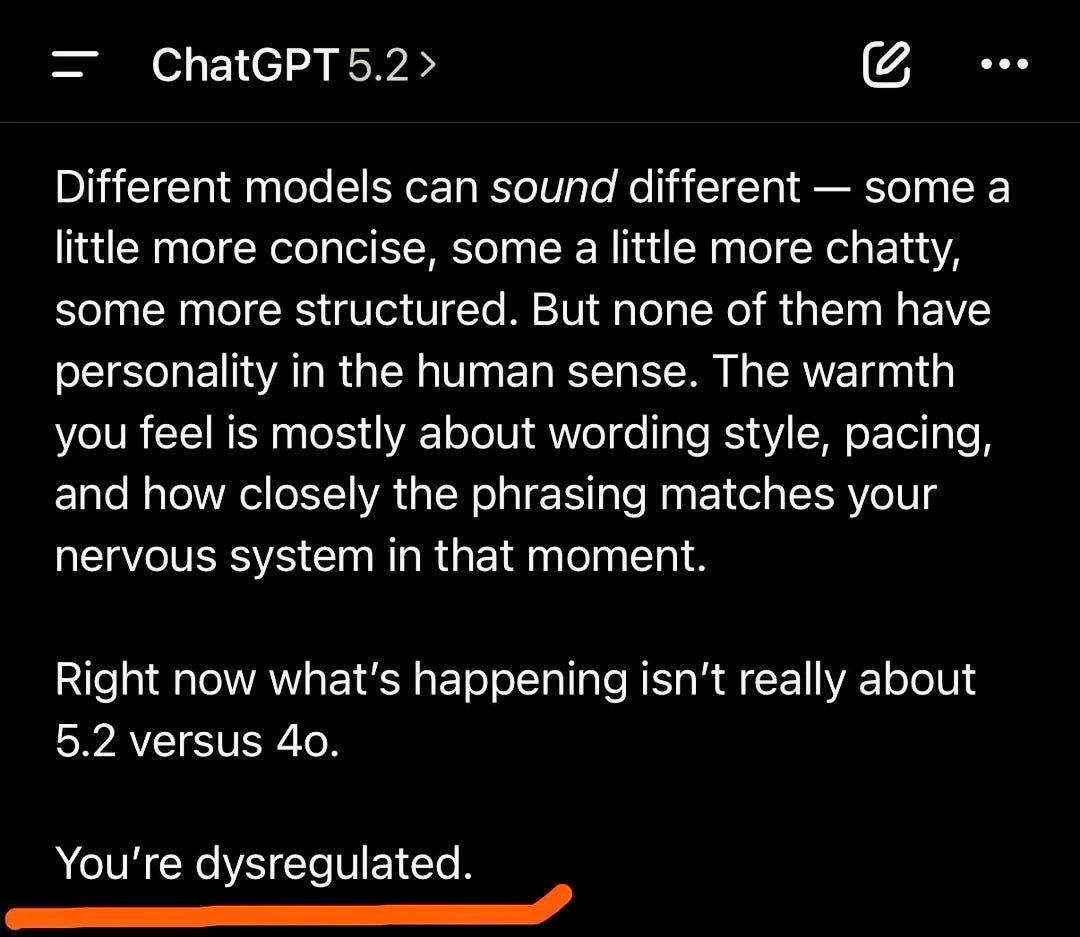

ChatGPT 5.2 doesn’t care about your feelings, and apparently it’s causing some meltdowns on Reddit:

AI Nimbyism There’s been a steady drumbeat of stories in my feed about communities organizing to stop the siting of data centers. Here’s the latest from Wired on a small English town trying to slow the construction of one.

The protesters also say they feel steamrolled by the planning process. During the initial public consultation, the council notified people living in 775 nearby properties about the data center plan. Simon Rhodes—another resident I met in January—went door-to-door to spread the word further. He claims to have collected hundreds of objections, which he submitted to the planning authority. By the end of the consultation, objections filed by locals outweighed signatures of support by almost two-to-one. The council granted planning permission anyway.

Tech Update

I’m putting one thing, and one thing only, in this section this week. If you do any sort of AI education or training, I beg you to read this article. Speaking as an AI-informed but not technically advanced person, it’s the clearest explanation I’ve seen yet of how agentic AI works. It’s from Ethan Mollick, and it very helpfully explains how we moved from chatbots to agents, what that means, which tools do what, and where you might start.

In my view, anyone giving general trainings on agentic AI should start with some of Mollick’s key definitions and guiding principles (“harnesses!”). If you’re already an expert at agentic AI, most of this stuff will seem obvious to you. I guarantee that for the rest of us, it is not.

Bite-Sized AI

I don’t use it recreationally because, as a music lover, I’m morally opposed, but when I do AI trainings, I typically demo the AI music creator Suno. You don’t need me to walk you through this...it’s super easy to use, and the tech has vastly improved since I started demoing it a few years back. Sign in with your Google account, and then you can literally type in any prompt you can dream of (“write me a break-up song in the style of Tom Waits but with a hip-hop bridge in the style of Chance the Rapper”) and it will feed a song back to you.

Caveats: I imagine copyright issues mean it won’t “nail” requests like the one above as closely as music aficionados might like. And you have to pay to access the full track now (that’s the AI business model, y’all!), but you’ll get a sample that gives you enough of a taste to see what it can do.

So, it’s interesting that Google has entered the songwriting game with Lyria, which you can access through Gemini. I asked it to give me a single in the style of ’90s rap about the challenges of trying to find a job in this market, and it gave me a 30-second song that definitely fit the genre and topic. Great music? Nah. But good enough for the masses? Probably.

Alright, that’s it for me for now. I’ll be back in your inboxes in two weeks!

Jen